According to Gartner surveys, 59% of organizations do not measure data quality. This creates a fundamental blind spot for AI. Data silos fragment training datasets and prevent models from learning comprehensive patterns. Annotation processes that lack domain expertise introduce systematic labeling errors. A lack of accountability allows quality problems to compound across AI model training cycles.

This blog explores the core quality issues affecting AI training data and strategies to mitigate them at the organizational level.

5 Core Training Data Quality Issues in AI Model Development

#1 Data Silos

When information is stored in isolated systems across departments or teams, the result is gaps, overlaps, and inconsistencies. These data silos typically emerge from common organizational dynamics:

- Departmental autonomy: business units operate independently with their own goals, budgets, and decision-making authority. As a result, departments often select software and platforms without considering enterprise-wide integration needs.

- Communication gaps: some teams rarely interact, work in separate locations, use different terminology, and maintain distinct processes. These teams develop isolated data collection methods, which produces incompatible formats across the organization.

- Legacy systems: older operating systems, ERPs, or CRMs often remain incompatible with modern platforms. This makes integration difficult and leaves isolated data stores that hinder unified datasets.

- Mergers and acquisitions: acquired entities bring their own systems, databases, and data architectures. These often include legacy formats that resist standardization, which creates complex integration challenges.

- Security and compliance controls: strict access restrictions and role-based permissions are necessary for regulatory compliance (GDPR, HIPAA, CCPA). However, they can unintentionally create isolated data repositories by limiting the cross-departmental access that AI training datasets require.

Key Indicators of Data Silos:

- Conflicting dashboards showing different metrics across teams

- Manual workarounds, with analysts spending most of their time reconciling data instead of building models

- Duplicate datasets with no clear ownership or version control

- Cross-functional reporting delays blocking AI model development

- Inconsistent data formats preventing automated processing

- Integration challenges limiting data unification capabilities
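Inconsistent formats are often the first obstacle to unifying siloed data. The sketch below uses two hypothetical departmental exports, `marketing_rows` and `sales_rows`. Both describe the same customer, but they differ in field names, ID style, date format, and phone format. It assumes the sales team writes dates day-first, and it maps each source onto one canonical schema using only the Python standard library. The mapping table `SOURCE_SCHEMAS` and the normalization rules are illustrative assumptions, not a prescribed standard.

```python
from datetime import datetime, date

def normalize_id(raw: str) -> str:
    # Keep letters and digits only, uppercased: "C-001" and "c001" both become "C001"
    return "".join(ch for ch in raw if ch.isalnum()).upper()

def normalize_phone(raw: str) -> str:
    # Keep digits only so "(555) 010-2000" and "555.010.2000" compare equal
    return "".join(ch for ch in raw if ch.isdigit())

def parse_date(raw: str, fmt: str) -> date:
    return datetime.strptime(raw, fmt).date()

# Hypothetical per-source mapping: source field -> (canonical field, normalizer)
SOURCE_SCHEMAS = {
    "marketing": {
        "customer_id": ("customer_id", normalize_id),
        "signup": ("signup_date", lambda v: parse_date(v, "%Y-%m-%d")),
        "phone": ("phone", normalize_phone),
    },
    "sales": {
        "CustID": ("customer_id", normalize_id),
        # Assumption: sales records dates day-first (DD/MM/YYYY)
        "SignupDate": ("signup_date", lambda v: parse_date(v, "%d/%m/%Y")),
        "Phone": ("phone", normalize_phone),
    },
}

def to_canonical(source: str, row: dict) -> dict:
    """Rename and normalize the fields of one row; fields not in the mapping are dropped."""
    schema = SOURCE_SCHEMAS[source]
    out = {"source": source}
    for field, value in row.items():
        if field in schema:
            canonical, normalize = schema[field]
            out[canonical] = normalize(value)
    return out

# Hypothetical exports describing the same customer
marketing_rows = [{"customer_id": "C-001", "signup": "2024-03-05", "phone": "(555) 010-2000"}]
sales_rows = [{"CustID": "c001", "SignupDate": "05/03/2024", "Phone": "555.010.2000"}]

unified = [to_canonical("marketing", r) for r in marketing_rows] + [
    to_canonical("sales", r) for r in sales_rows
]
for record in unified:
    print(record)
```

Keeping the per-source mapping in one table shows exactly which team's conventions are being translated. A new source can then be added without changing the normalization logic.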

#2 Data Annotation Challenges

Other data quality issues might affect model accuracy gradually, but annotation problems directly encode errors into model behavior. The core annotation challenges that undermine AI model performance include:

- Domain Expertise Gaps: When annotators lack specialized knowledge in the relevant field—whether medical imaging, legal document analysis, or financial risk assessment—they make systematic labeling errors that become embedded in model training.

For instance, in chest X-ray analysis, a radiologist can distinguish pneumonia from pulmonary edema. A general annotator might label both as generic “lung opacity” or mistake one for the other because they look similar.

- Ambiguity in Data Interpretation: Without clear annotation guidelines and decision frameworks, different annotators interpret the same data differently. For instance, in customer sentiment analysis, one annotator may label “okay” as neutral while another labels it positive. This interpretive variability creates conflicting training signals, which lead to inconsistent and unreliable model predictions.

- Lack of Standardization: Organizations often begin annotation projects without establishing consistent labeling taxonomies, quality metrics, or validation processes. Teams use different annotation tools, follow different procedures, and apply different criteria, resulting in datasets that contain systematic inconsistencies across different annotation batches or time periods.

- Scalability Limitations: AI models require increasingly large training datasets, and organizations struggle to scale annotation processes to match. This often results in weakened quality controls and inadequate validation.
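Inter-annotator agreement is one common way to make annotation ambiguity measurable. The sketch below computes Cohen's kappa with the standard library. Kappa adjusts the raw agreement between two annotators for the agreement expected by chance. The sentiment labels are hypothetical. The single disagreement reflects the “okay” example above, where one annotator chose neutral and the other chose positive.

```python
from collections import Counter

def cohen_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies, summed over labels
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (p_o - p_e) / (1 - p_e)

# Hypothetical sentiment labels; item 2 is the "okay" case (neutral vs. positive)
annotator_a = ["positive", "neutral", "negative", "neutral", "positive", "negative"]
annotator_b = ["positive", "positive", "negative", "neutral", "positive", "negative"]

kappa = cohen_kappa(annotator_a, annotator_b)
print(f"{kappa:.2f}")  # 0.75
```

Low agreement on a batch signals that the guidelines need revision or that items should go to domain experts before the labels reach training. The acceptable threshold is a project decision, not a fixed rule.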

#3 Inconsistent Processes and Workflows

Without standardized workflows, data quality becomes dependent on individual judgment and attention to detail—factors that don’t scale to enterprise requirements.

For instance, the marketing team may classify a “lead” as anyone who downloads a whitepaper. The sales team may count only someone who requests a product demo as a qualified lead. The result is conflicting conversion metrics and unreliable forecasting models. Such process inconsistencies produce recurring errors that become embedded in training datasets and degrade AI model performance.
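The lead example can be made concrete. In this sketch, the prospect data and both definitions (`is_lead_marketing`, `is_lead_sales`) are hypothetical. Each team applies its own definition to the same records, and the two conversion rates differ sharply. This is the kind of conflicting signal that ends up in training labels.

```python
# Hypothetical prospect records: the set of actions each prospect took
prospects = {
    "p1": {"downloaded_whitepaper"},
    "p2": {"downloaded_whitepaper", "requested_demo"},
    "p3": {"requested_demo"},
    "p4": {"downloaded_whitepaper"},
}
customers = {"p2", "p3"}  # prospects that later became paying customers

def is_lead_marketing(actions: set[str]) -> bool:
    # Marketing's definition: any whitepaper download counts as a lead
    return "downloaded_whitepaper" in actions

def is_lead_sales(actions: set[str]) -> bool:
    # Sales' definition: only a demo request counts as a qualified lead
    return "requested_demo" in actions

def conversion_rate(is_lead) -> float:
    leads = {p for p, actions in prospects.items() if is_lead(actions)}
    if not leads:
        return 0.0
    return len(leads & customers) / len(leads)

marketing_rate = conversion_rate(is_lead_marketing)
sales_rate = conversion_rate(is_lead_sales)
print(f"marketing: {marketing_rate:.0%}, sales: {sales_rate:.0%}")  # marketing: 33%, sales: 100%
```

The fix is organizational rather than technical. Teams can agree on a single documented definition. Alternatively, they can keep both definitions under distinct names, such as marketing-qualified and sales-qualified leads, and record which definition produced each label.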

#4 Inaccurate or Outdated Data

When organizations fail to maintain data accuracy, AI models learn from information that no longer reflects operational reality.

Key Indicators of Inaccurate/Outdated Data:

- Outdated information where customer preferences, market conditions, or operational parameters no longer reflect current business reality.

- Data entry errors from manual input mistakes, system malfunctions, or integration failures that introduce systematic inaccuracies across datasets.

- Lack of metadata context where data assets exist without definitions, lineage, or ownership documentation, making it impossible to assess quality or apply consistent standards.

- Misclassified records tagged with incorrect categories or business definitions, leading to flawed machine learning model training.

- Duplicate entries across systems that create redundant records for the same entities, with no clear ownership or deduplication process.

- Inconsistent values for identical fields across different systems, such as conflicting customer addresses in CRM versus ERP platforms.
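Several of these indicators can be checked automatically. The following sketch uses hypothetical CRM and ERP snapshots keyed by a shared customer `id`. It assumes a one-year freshness policy (`MAX_AGE`) and a fixed reference date (`AS_OF`) so the results are reproducible. Both values are illustrative. The sketch flags three problems: IDs that appear more than once within a system, records not updated within the policy window, and customers whose address differs between systems.

```python
from datetime import date, timedelta

# Hypothetical snapshots of the same customers in two systems
crm = [
    {"id": "C001", "address": "12 Oak St", "updated": date(2024, 11, 2)},
    {"id": "C002", "address": "9 Elm Ave", "updated": date(2021, 6, 15)},
    {"id": "C002", "address": "9 Elm Ave", "updated": date(2021, 6, 15)},  # duplicate entry
]
erp = [
    {"id": "C001", "address": "12 Oak Street", "updated": date(2024, 10, 20)},
    {"id": "C002", "address": "9 Elm Ave", "updated": date(2023, 1, 9)},
]

MAX_AGE = timedelta(days=365)  # assumed freshness policy; set per business domain
AS_OF = date(2025, 1, 1)       # fixed reference date so the example is reproducible

def find_duplicates(records: list[dict]) -> set[str]:
    # IDs that appear more than once within a single system
    seen, dupes = set(), set()
    for r in records:
        if r["id"] in seen:
            dupes.add(r["id"])
        seen.add(r["id"])
    return dupes

def find_stale(records: list[dict], as_of: date = AS_OF, max_age: timedelta = MAX_AGE) -> set[str]:
    # IDs whose last update is older than the freshness policy allows
    return {r["id"] for r in records if as_of - r["updated"] > max_age}

def find_conflicts(a: list[dict], b: list[dict], field: str) -> set[str]:
    # IDs present in both systems whose values for `field` differ
    a_vals = {r["id"]: r[field] for r in a}
    b_vals = {r["id"]: r[field] for r in b}
    return {i for i in a_vals.keys() & b_vals.keys() if a_vals[i] != b_vals[i]}

report = {
    "crm_duplicates": find_duplicates(crm),
    "crm_stale": find_stale(crm),
    "erp_stale": find_stale(erp),
    "address_conflicts": find_conflicts(crm, erp, "address"),
}
print(report)
```

The flagged address conflict ("12 Oak St" vs. "12 Oak Street") may be a formatting difference rather than a true disagreement. For this reason, values are usually normalized before cross-system comparison.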

#5 Lack of Accountability & Data Governance

When organizations lack formal governance policies, no single entity is accountable for data quality outcomes, and AI model performance suffers as a result.

- Distributed accountability, where responsibility for data quality spans multiple teams without clear ownership. Business units then define metrics and labels inconsistently, creating conflicting signals in training datasets.

- Compliance with regulations such as the General Data Protection Regulation (GDPR) or the Health Insurance Portability and Accountability Act (HIPAA) becomes inconsistent across departments without centralized governance. The resulting fragmented data handling practices expose organizations to legal violations.

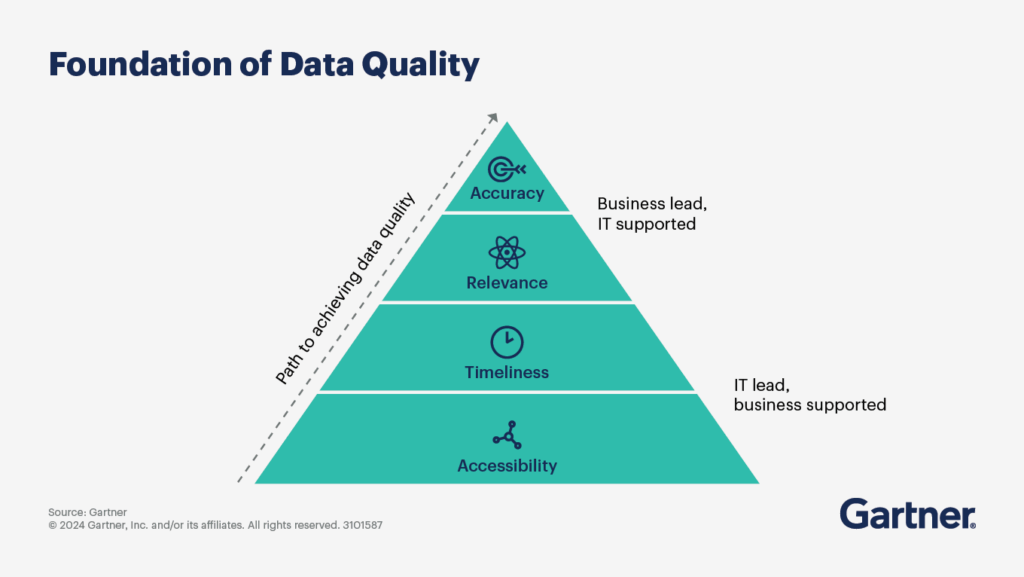

The Strategic Imperative is Clear: Poor training data quality causes AI models to underperform. Organizations in this position risk losing competitive edge and market share to those that use data-driven decision making. Addressing this requires executive action across four critical areas:

- Comprehensive data audits that identify and quantify quality gaps across all systems

- Standardized data governance frameworks that establish clear accountability and consistent practices enterprise-wide

- Specialized data annotation services that provide the domain expertise and scalability most organizations cannot develop internally

- Continuous monitoring systems that detect and prevent quality degradation in real time
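Here is a minimal sketch of one monitoring check. It assumes each incoming batch is a list of dicts and that per-field limits on missing values (the hypothetical `MAX_NULL_RATE`) are agreed upfront. A batch that triggers alerts can be held back before it reaches training data. Production monitoring would also track other dimensions, such as freshness, schema changes, and label distribution, and would run inside the ingestion pipeline. This sketch only flags excessive missing values.

```python
# Hypothetical per-field limits on the share of missing values in a batch
MAX_NULL_RATE = {"customer_id": 0.0, "email": 0.05, "segment": 0.10}

def null_rates(batch: list[dict], fields) -> dict[str, float]:
    # Share of rows where each field is absent, None, or an empty string
    n = len(batch)
    return {f: sum(1 for row in batch if row.get(f) in (None, "")) / n for f in fields}

def check_batch(batch: list[dict], limits: dict[str, float] = MAX_NULL_RATE) -> list[str]:
    """Return one alert per field whose missing-value rate exceeds its limit."""
    if not batch:
        return ["empty batch"]
    rates = null_rates(batch, limits)
    return [
        f"{field}: {rate:.0%} missing exceeds limit {limits[field]:.0%}"
        for field, rate in rates.items()
        if rate > limits[field]
    ]

# Hypothetical incoming batch
batch = [
    {"customer_id": "C001", "email": "a@example.com", "segment": "smb"},
    {"customer_id": "C002", "email": "", "segment": "enterprise"},
    {"customer_id": "C003", "email": "c@example.com", "segment": None},
    {"customer_id": "C004", "email": "d@example.com", "segment": "smb"},
]

alerts = check_batch(batch)
for alert in alerts:
    print(alert)
```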

Organizations that implement these systematic approaches move from ad hoc data management to strategic data governance. This enables them to build reliable AI systems that drive sustainable competitive advantage and market leadership.